Autonomous Vehicle Decision-Making and Control

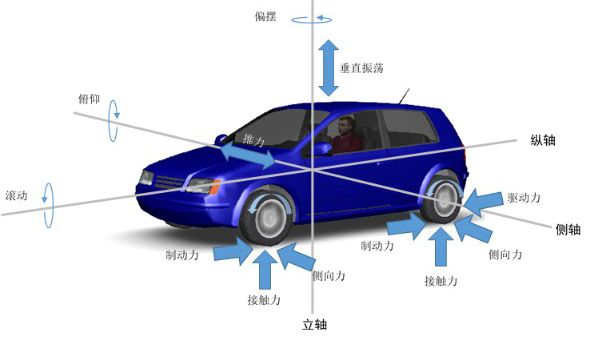

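Vehicle behavior prediction: a vehicle's trajectory is shaped by two factors acting together. The first is the driver's behavior, such as a lane change that reflects the driver's intent. The second is the external environment, such as external stimuli that influence the trajectory while driving (for example, traffic lights).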

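Prediction methods: these factors have given rise to several approaches to trajectory prediction, including physics-model-based prediction, behavior-model-based prediction, neural-network-based prediction, interaction-aware prediction, hybrid methods that combine several approaches, bionics-inspired prediction, and physics-model-based estimation and prediction.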

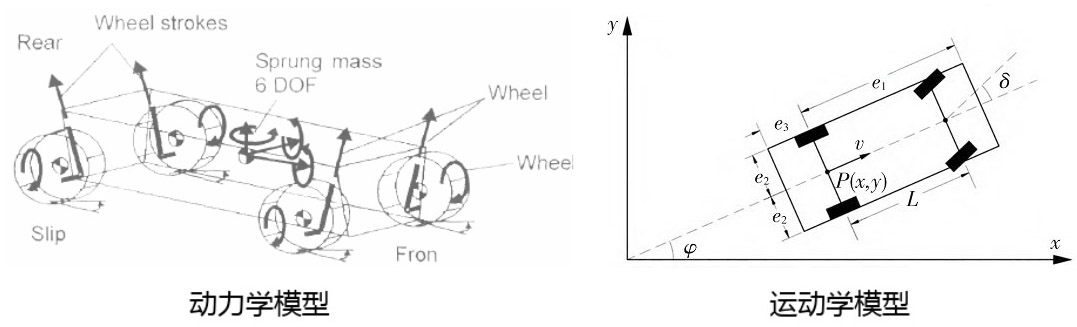

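Physics-model-based trajectory prediction: the vehicle is represented as a dynamic entity governed by the laws of physics, and its future motion is predicted with dynamic and kinematic models.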

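These models link control inputs (e.g., steering angle and acceleration), vehicle properties (e.g., mass), and external conditions (e.g., the road-surface friction coefficient) to the evolution of the vehicle state (e.g., position).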
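As an illustration, here is a minimal Python sketch of single-trajectory prediction with a kinematic bicycle model, assuming steering and acceleration stay constant over the horizon. The names `State`, `step`, and `predict_trajectory`, the 2.7 m wheelbase, and μ = 0.8 are hypothetical choices for this example. A kinematic model does not use mass (a dynamic model would), and friction enters here only as a crude cap of μ·g on longitudinal acceleration.

```python
import math
from dataclasses import dataclass

G = 9.81  # gravitational acceleration, m/s^2


@dataclass
class State:
    x: float    # position, m
    y: float    # position, m
    yaw: float  # heading, rad
    v: float    # speed, m/s


def step(s: State, steer: float, accel: float,
         wheelbase: float, mu: float, dt: float) -> State:
    # Crude friction limit (assumption): tire-road friction caps
    # longitudinal acceleration at mu * g.
    a = max(-mu * G, min(mu * G, accel))
    # Forward-Euler step of the kinematic bicycle model (rear-axle reference).
    return State(
        x=s.x + s.v * math.cos(s.yaw) * dt,
        y=s.y + s.v * math.sin(s.yaw) * dt,
        yaw=s.yaw + s.v / wheelbase * math.tan(steer) * dt,
        v=max(0.0, s.v + a * dt),  # this sketch does not model reversing
    )


def predict_trajectory(s0: State, steer: float, accel: float,
                       wheelbase: float = 2.7, mu: float = 0.8,
                       dt: float = 0.1, horizon: float = 3.0) -> list:
    """Roll the model forward with constant inputs; returns horizon/dt + 1 states."""
    traj = [s0]
    for _ in range(round(horizon / dt)):
        traj.append(step(traj[-1], steer, accel, wheelbase, mu, dt))
    return traj


traj = predict_trajectory(State(0.0, 0.0, 0.0, 10.0), steer=0.05, accel=0.5)
print(f"position after 3 s: ({traj[-1].x:.1f}, {traj[-1].y:.1f}) m")
```

Holding the inputs constant keeps the sketch simple but is also the main weakness of single-trajectory prediction: it yields one deterministic path and says nothing about uncertainty in the inputs or the initial state.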

Physics-model-based trajectory prediction methods include single-trajectory simulation, Kalman filtering (such as the Extended Kalman Filter, EKF), and the Monte Carlo method.
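The sketch below contrasts two of these methods on a simple nonlinear motion model with constant speed and heading, state [x, y, θ, v]. `ekf_predict` runs only the prediction step of an Extended Kalman Filter: it propagates the mean through the model and the covariance through the model's Jacobian. `monte_carlo_predict` instead samples many initial states and simulates each one forward. The motion model, function names, and noise values are assumptions for illustration, and process noise is set to zero so that both methods describe the same uncertainty.

```python
import math
import random
import statistics


def motion(s, dt):
    """Constant speed and heading (assumed model): s = [x, y, theta, v]."""
    x, y, th, v = s
    return [x + v * math.cos(th) * dt, y + v * math.sin(th) * dt, th, v]


def motion_jacobian(s, dt):
    """Partial derivatives of motion() with respect to [x, y, theta, v]."""
    _, _, th, v = s
    return [
        [1.0, 0.0, -v * math.sin(th) * dt, math.cos(th) * dt],
        [0.0, 1.0, v * math.cos(th) * dt, math.sin(th) * dt],
        [0.0, 0.0, 1.0, 0.0],
        [0.0, 0.0, 0.0, 1.0],
    ]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(a):
    return [list(col) for col in zip(*a)]


def ekf_predict(mean, cov, q, dt, steps):
    """EKF prediction step repeated over the horizon (no measurement update)."""
    for _ in range(steps):
        F = motion_jacobian(mean, dt)  # linearize at the current mean
        mean = motion(mean, dt)
        cov = matmul(matmul(F, cov), transpose(F))  # P <- F P F^T
        cov = [[cov[i][j] + q[i][j] for j in range(4)] for i in range(4)]  # + Q
    return mean, cov


def monte_carlo_predict(mean, std, dt, steps, n_samples=2000, seed=0):
    """Sample independent Gaussian initial states and roll each one forward."""
    rng = random.Random(seed)
    finals = []
    for _ in range(n_samples):
        s = [rng.gauss(m, sd) for m, sd in zip(mean, std)]
        for _ in range(steps):
            s = motion(s, dt)
        finals.append(s)
    return finals


mean0 = [0.0, 0.0, 0.0, 10.0]   # at the origin, heading along x, 10 m/s
std0 = [0.1, 0.1, 0.02, 0.5]    # assumed 1-sigma uncertainty of each state
cov0 = [[std0[i] ** 2 if i == j else 0.0 for j in range(4)] for i in range(4)]
q = [[0.0] * 4 for _ in range(4)]  # zero process noise

ekf_mean, ekf_cov = ekf_predict(mean0, cov0, q, dt=0.1, steps=10)
samples = monte_carlo_predict(mean0, std0, dt=0.1, steps=10)
mc_x = [s[0] for s in samples]
mc_y = [s[1] for s in samples]
print("EKF x mean/var:", ekf_mean[0], ekf_cov[0][0])
print("MC  x mean/var:", statistics.mean(mc_x), statistics.pvariance(mc_x))
```

For this nearly linear case the Monte Carlo mean and spread should closely match the EKF estimate. The two tend to diverge when the model is strongly nonlinear over the prediction horizon; that is where sampling justifies its much higher computational cost.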

1. Autonomous Vehicle Decision-Making and Control (自动驾驶汽车决策与控制). Tsinghua University Press.